Stream

Thoughts on Conversational Design – 2024

While at UX Brighton, Lorraine Burrell kindly invited me to The AI Meet-up South - Conversational AI Edition event. The theme of the event was reflections on 2024. There were three great talks focused on chatbots and the world of conversational product design. As with many of these events, the discussion afterward was also just as enlightening. I wanted to highlight four themes or reflections that interested me.

Questions and answers, not helping people with tasks.

This manifests in two key points. Current product and feature designs are often too focused on Q&A, yet humans naturally mix discovery questions with task-oriented goals. Many products seem to lack the sense of joint action and journey planning needed to focus on positive task outcomes in conversations. Directional repair is missing in many current chat experiences: that is, guiding users through a series of discovery questions and then helping them take action. Too often, these are empty calorie conversations.

The cost of flexibility is always control.

As teams move from rule-based systems for managing conversations to LLM-powered conversational elements, there’s a noticeable loss of fine-grain control over design. This often shows up as product teams saying LLMs are not terse enough, overly polite, or too generalist in their responses. The language used by LLMs rarely matches the brand voice of the deploying company and fails to adjust as the context of the conversation evolves. It’s been fascinating to hear real examples of the benefits of out-of-the-box LLM responses, but also how these outputs are watering down the stylistic standards carefully put in place by conversational designers.

If RAG does not work.

If your RAG implementation isn’t working, it’s more likely an issue with content design or informational transformation than something a new technical layer can fix. For instance, dumping a website into RAG often destroys its usefulness. Even high-quality web content loses impact when stripped from its original visual and informational architecture and forced to function in an entirely different medium and context. The lesson here is that it’s not about whether LLM or RAG is “bad”; it’s about where, how, and whether these tools are truly needed for solving your users’ problems.

Solutionizing chat is so 2024.

One major issue with AI chat interfaces, as popularized by OpenAI, is that they give the facade of substantial utility but rarely deliver in real-world use cases. This year, that problem seems to have driven a wave of “solutionizing innovation” projects in many corporations. There’s a lot of anecdotal evidence across the tech and design industries to support this. I believe this trend will be short-lived, and in 2025, user need-driven product design will push back. OpenAI’s own shift to focusing its products on specific use cases—like writing and coding through the canvas interface—is an early sign of this shift.

I also enjoyed a conversation with Kane Simms about the use of ML models to predict content for a user based on the history of their navigational path.

UX Brighton 2024

Massively enjoyed UX Brighton this year! All the talks were really insightful with interesting threads and themes weaving through the whole day. Thanks to the other speakers: Will Taylor, Hannah Beresford, Philip Bonhard, Pablo Stanley, Kwame Ferreira, Maggie Appleton, Manú Bartlett and Ewa Koc. Maggie's talk on "Expanding Dark Forest" gave me a lot to think about.

Giving a talk on the impact of ML on design was a challenge — it's such a complex and evolving topic, and it's hard to get the balance right. It feels like we are all still at the exploring and learning stage. It's also quite emotive, where possible impacts of change are never far from the surface.

Being a speaker at a large conference is always a privilege; it's like speed networking. It breaks down the barriers, where people talk to you in a deeper way. You get to hear about their passions and experiences.

Books that informed the talk:

How we talk, by N J Enfield

Conversation With Things by Diana Deibel and Rebecca Evanhoe

Conversational Design by Erika Hall

The slide deck from my talk: Human Conversations with Grids of Numbers.pdf

Friday 1 November 2024

The Dome, Brighton

Talk at UX Edinburgh

I had a great time giving a talk at UX Edinburgh. Many thanks to our host Nile, whose office space must be located on one of the most picturesque streets in Edinburgh. Neil Collman, the Design Director, shared some insightful research on users' thinking about AI. I gave my talk, titled "Human Conversations with Grids of Numbers".

The Q&A and discussion afterwards were really rewarding, with lots of interesting questions. In particular, I enjoyed the discussion about how quality conversational exchanges in digital experiences can impact levels of trust.

There was a great quote from Kay Cochrane:

Glenn’s points on changing the mechanisms of interaction, proposing responsive UX as well as UI and considering where GenAI takes visual design is equally pure excitement and totally terrifying.

I'd like to thank Ricky Callaghan and Mike Jefferson for organizing the event.

The slide deck from my talk: Human Conversations with Grids of Numbers.pdf

Thursday, October 24, 2024

Nile HQ - 13-15 Circus Lane · Edinburgh

Talking at UX Brighton 2024

I'm incredibly excited to be speaking at UX Brighton 2024 in November! This year's conference theme, "What do UX designers, researchers, and managers need to know about AI?", perfectly aligns with my interests. I'm developing a talk about how the new generation of ML will reshape user interface patterns.

UX Brighton 2024 - UX & AI

Friday 1 November, Brighton Dome

https://uxbri.org/

After brainstorming with event organizer Danny Hope, this is the talk title and description I am going with:

Human conversations with grids of numbers

The rise of large language models signals a new era in machine learning. Yet, these grids of numbers, while impressive, offer a faltering facade of true human communication. Past research reminds us that conversation is a beautiful, chaotic, and profoundly human tool that resists easy replication.

However, there is a seed in generative AI of something to come: tools that can generate individualised and ethereal interactions. Emerging reasoning capabilities that can interact with the experiences we have built for humans, which act as agents on our behalf. These systems hold the promise of eventually working in collaboration with us, through our greatest tool – spoken and written language.

In this talk Glenn will explore possible AI-driven user experiences. What will be the place for graphical interfaces in a world of conversational AI? Will chat UI design patterns prove to be just a passing adolescent phase – something we’ll all soon look back on with a wry smile?

How We Talk by N. J. Enfield

This book offers a thought-provoking introduction to the mechanics of conversation. It makes a forceful case for the importance of timing, repair, and the procedural utterances we use to construct successful conversations. Enfield methodically unveils the hidden and unconscious scaffolding we use as we talk to each other. Who knew "Huh?" was so important an element in spoken language? It is well laid out and easy to read.

A small gripe is that the academic rigor of laying bare all the supporting points sometimes gets in the way of the fascinating insights from the research. If you are at all interested in the field of conversation design it is well worth the read.

Published: Nov 2017

ISBN : 978-0465059942

https://www.hachettebookgroup.com/titles/n-j-enfield/how-we-talk/9780465059942/?lens=basic-books

Reading notes:

A thread through the book is that standard linguistic theory is focused only on written forms of language, ruling out many of the elements of real conversation, with all its errors, corrections and collaborative flow controls. Enfield tasks himself with correcting this.

“Linguistic theory is concerned primarily with an ideal speaker-listener…”

Conversation Has Rules

The second chapter goes well beyond the concept of 'politeness' often used to describe the rules of conversation.

“By definition, joint action introduces rights and duties. As Gilbert says … each person (in a conversation) has a right to the other’s attention and corrective action. Each person has a moral duty to ensure they are doing their part.”

Entering into a conversation is entering a contract for collaborative joint action, with well-defined rules and roles. The contract lays out many corrective measures to repair and realign a conversation if one party believes the other has strayed. I thought the examples of how people correct each other were fascinating. They border on areas of control and power in the relationships between those talking.

This contract with others to allow them to correct us could cause a profound point of conflict for conversational interfaces between humans and AI, once AI can achieve the ability to join a turn-taking conversation.

Split-Second Timing

The third chapter looks at the importance of timing. It slowly builds a picture that timing is not just an end product of the thought process but is used to convey meaning and help us structure the conversational flow.

A study of Dutch found that 40% of turn-taking transitions in conversation occurred within a 200ms window on either side of zero, with 85% occurring within 750ms of zero. Similar results were found in studies of English and German.

The time to form a conversational response “from intention to articulation”:

175ms Retrieve concepts

75ms Concepts to words

80ms Words to sounds – phonological codes

125ms Forming the sounds into syllables

145ms Executing the motor program to pronounce the words

600ms Total

If the typical turn-taking gap in English is 200ms, then people are starting to form a response well in advance of the last speaker finishing. There is a measurable percentage of people overlapping the end of the last turn, although the total time two people are speaking is relatively small at 3.8%, meaning even overlaps are well-timed.
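The arithmetic behind this point can be sketched in a few lines of JavaScript. The stage timings and the 200ms gap are the figures quoted in these notes; the variable names, and the idea of subtracting the gap from the total to get a "head start", are only an illustration and are not taken from the book.

```javascript
// Stage timings (ms) as listed in the reading notes.
const stages = [
  { name: 'Retrieve concepts', ms: 175 },
  { name: 'Concepts to words', ms: 75 },
  { name: 'Words to sounds (phonological codes)', ms: 80 },
  { name: 'Sounds into syllables', ms: 125 },
  { name: 'Execute the motor program', ms: 145 },
];

// Total time from intention to articulation.
const totalMs = stages.reduce((sum, stage) => sum + stage.ms, 0);

// Typical gap between turns quoted in the notes.
const typicalGapMs = 200;

// How long before the current speaker finishes the next speaker
// would need to start forming a response (illustrative only).
const headStartMs = totalMs - typicalGapMs;

console.log(`Total production time: ${totalMs}ms`);
console.log(`Response planning starts about ${headStartMs}ms before the turn ends`);
```

With these figures the listener has to begin planning roughly two thirds of the way through their own production time before the speaker has stopped, which is why projection matters.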

“In their 1974 paper on the rules of turn-taking, Sacks and colleagues identified this ability to tell in advance when a current speaker would finish, referring to the skill as projection.”

Among other signals, we use both pitch and the length of the last syllable to signal to others that the end of a turn is coming, i.e., prosody.

“…study shows that the signals for turn ending combine several features of sound of utterances, as well as the grammatical structure of the utterance. … the fact that grammar alone cannot be sufficient”

The One-Second Window

The preferred and dispreferred responses to the last turn have different time gaps. A preferred response to the question “Can you come out tonight?” would be "yes" or "no" and would be answered promptly. A dispreferred response could be “I don’t know, I will have to check my calendar.” These dispreferred responses are typically in the late zone, at about 750ms.

“In nearly half the dispreferred responses, the first sound one hears is not a word at all, but an inbreath (or click, that is a “tut” or “tsk” sound).”

“…people are now able to manipulate timing to send social signals about how a response is being packaged.”

“…also see “well” and “um” playing a role in packaging and postponing certain kinds of response in conversation.”

The Traffic Signals

The chapter covers the use of “um” and “uh” in great detail. It concludes that these little words are part of the conversational machine, allowing us to signal a brief delay and forestall the handing over of a turn due to a longer gap than usual. These signals are very frequent, with men making use of them every 50 words and women every 80 words.

“In written language, the reader does not directly witness the act of production.”

Transcripts are often cleaned up, removing inevitable problems with the choice of words, pronunciation, and content. With conversation, this process of production is visible.

“The use of these little traffic signals such as “uh/um”, “uh-huh” and “okay” all illustrate ways in which bits of language are used for regulating language use itself.”

They are the procedural directional instructions of conversation. They form part of the joint commitment to a conversation.

Repair

On average, we need to repair an informal conversation every 84 seconds. These repairs are a normal part of our conversations, and we have ways of signaling a correction, much like we do for a small delay. Our ability to do this is what keeps a conversation on track and moving at speed.

“Hardly a minute goes by without some kind of hitch: a mishearing, wrong word, poor phrasing, a name not recognized.”

Conversation With Things by Diana Deibel and Rebecca Evanhoe

Conversation With Things is a fascinating journey into the world of conversational design. Authors Diana Deibel and Rebecca Evanhoe have gone to great lengths to produce the introduction they wish they'd had when they started. Two chapters, 'Talking like a Person' and 'Complex Conversations', truly demonstrate an understanding of the subject and convey the feeling that they are grounded in many years of practice and analysis.

Although the book was written before generative AI burst into the world, it remains relevant. The chapters on defining intents and documenting conversational pathways could easily be seen now as methods of evaluation and testing for human alignment with LLM-based conversational tools. I hope the authors will one day consider a second edition that encompasses practices in the age of generative AI.

ISBN: 978-1933820-26-2

Published: April 2021

https://rosenfeldmedia.com/books/conversations-with-things/

Reading notes:

Talking Like a Person

The second chapter, titled "Talking Like a Person," explores various layers of complexity in human conversation. These themes interweave like the conversation structures they describe:

Conversation is co-created: Participants collaborate to achieve a shared goal or outcome.

Prosody and intonation are fundamental to spoken language, forming part of its structure rather than merely being add-ons in dialogue construction.

Turn-taking is the interplay through which conversation forms, encapsulating power structures and much more than its mechanics initially suggest.

Conversation unfolds in a messy manner; it is structured but not always in a formal exchange of turn-taking.

Repair: We are constantly repairing our conversations, bringing them back to a point where the process of co-creation works. According to Nick Enfield, this occurs approximately every 84 seconds when two people speak.

Accommodation involves a chain reaction of adjustments in response to each other and the situation during conversation.

Mirroring, or "limbic synchrony," entails matching posture, expressions, and gestures, as well as speech elements like pace, vocabulary, pronunciation, and accents; this process is called convergence.

Code-switching requires presenting different identities to elicit an outcome.

Politeness goes beyond a list of social constraints such as not licking a bowl in a restaurant; in conversation, it serves as a contract between parties. When considered alongside the concept of repairing, it leads to more dynamic and fluid ideas, as expressed by Onuigbo G. Nwoye:

"It’s a series of verbal strategies for keeping social interactions friction-free."

The authors regard Grice's Maxims as a somewhat simplistic foundation for conversation, covering cooperative principles but missing some important elements of conversational theory and design addressed in the points above.

The Rest of the Book

I read this book as part of my research into linguistic user interfaces being built around LLMs and AI chat. While other chapters in the book contain a wealth of valuable material, I am only pulling out a few subjects that are of specific interest to me at this time.

Common question types

In the section dealing with scripted flows, the authors identify six of the most common question types posed to users, which form the construction of turn-taking in older conversational tools:

Open-ended

Menu

Yes-or-no

Location

Quantifying

Instructional

There are a couple of useful UI elements that work with these questions: a confirmation component and a repeat request.
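As a rough illustration of how one of these question types might pair with the two UI elements, here is a minimal JavaScript sketch of a single scripted yes-or-no turn. Only the six question type names come from the book; the function name, the accepted replies and the wording of the prompts are hypothetical.

```javascript
// The six question types named in the book.
const QUESTION_TYPES = [
  'open-ended', 'menu', 'yes-or-no', 'location', 'quantifying', 'instructional',
];

// Hypothetical handler for one scripted yes-or-no turn.
// A recognised reply leads to a confirmation; anything else
// triggers a repeat request that asks the same question again.
function handleYesNo(prompt, reply) {
  const text = reply.trim().toLowerCase();
  if (['yes', 'y', 'yeah'].includes(text)) {
    return { next: 'confirm', say: 'You said yes. Is that right?' };
  }
  if (['no', 'n', 'nope'].includes(text)) {
    return { next: 'confirm', say: 'You said no. Is that right?' };
  }
  return { next: 'repeat', say: `Sorry, I didn't catch that. ${prompt}` };
}

console.log(handleYesNo('Would you like a receipt?', ' Yes '));
console.log(handleYesNo('Would you like a receipt?', 'maybe later'));
```

The repeat request here simply restates the prompt; a real flow would probably vary the wording on each retry.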

Cognitive load with task order, lists, and prosody

Ordering tasks is crucial for reducing cognitive load. This seems to be most related to the sequencing of instructions.

Spoken lists significantly increase cognitive load for users. The authors delve into detail on reducing complexity to enhance recall, which greatly impacts menu structures.

The absence of prosody in the simple conversion of written text to TTS (Text-to-Speech) significantly increases cognitive load. In the past, SSML (Speech Synthesis Markup Language) has been utilized and text has been scripted in a dialogue style.
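To make the SSML point concrete, here is a small JavaScript sketch that wraps a short spoken list in SSML, slowing the delivery and adding a pause after each item. The `speak`, `prosody` and `break` elements are standard SSML; the function names, the 300ms pause and the example menu are assumptions for illustration and are not from the book.

```javascript
// Escape characters that are special in XML so item text cannot break the markup.
function escapeXml(text) {
  return text
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;');
}

// Build an SSML string for a spoken list, with a pause after each item.
function spokenList(intro, items, pauseMs = 300) {
  const parts = items.map(
    (item) => `${escapeXml(item)}<break time="${pauseMs}ms"/>`
  );
  return `<speak>${escapeXml(intro)} <prosody rate="slow">${parts.join(' ')}</prosody></speak>`;
}

console.log(spokenList('You can choose from:', ['Tea', 'Coffee', 'Water']));
```

How a given TTS engine renders these hints varies, so the result needs to be checked by ear.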

"Human conversation is multimodal"

Human conversation is multimodal. This simple statement was one of the strongest messages I took away from the book. We operate by blending all our senses simultaneously to facilitate conversation flow. We employ visual body language along with prosody and the content of our words to communicate effectively.

Lisa Falkson of the Alexa team found that when users are presented with visual and audio information together, they often mute the audio to focus on the visual information.

You can still utilize visual and audio information if you are employing strong visualization reinforced by audio. Lisa uses Alexa’s weather as an example. If you do this, the elements need to be synced. Lisa refers to this as the "temporal binding window" of 400 milliseconds.
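A sync check along these lines can be sketched in JavaScript. The 400 millisecond figure is the one quoted above; treating it as the maximum allowed offset between the start of the audio and the start of the visual is my assumption, and the function and constant names are hypothetical.

```javascript
// Window quoted in the notes, in milliseconds.
const TEMPORAL_BINDING_WINDOW_MS = 400;

// Returns true when the audio and visual cues start close enough together
// to fall inside the window. The interpretation of the window as a maximum
// offset between the two start times is an assumption.
function isInSync(audioStartMs, visualStartMs, windowMs = TEMPORAL_BINDING_WINDOW_MS) {
  return Math.abs(audioStartMs - visualStartMs) <= windowMs;
}

console.log(isInSync(1000, 1250)); // visual starts 250ms after the audio
console.log(isInSync(1000, 1500)); // visual starts 500ms after the audio
```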

Follow up links and reading:

https://www.cambridge.org/core/books/using-language/4E7EBC4EC742C26436F6CF187C43F239

https://www.researchgate.net/publication/231870679

https://onlinelibrary.wiley.com/doi/book/10.1002/9781118247273

https://en.wikipedia.org/wiki/Turn-taking

https://en.wikipedia.org/wiki/Conversation_analysis

https://www.hachettebookgroup.com/titles/n-j-enfield/how-we-talk/9780465059942/?lens=basic-books

Conversational Design by Erika Hall

Conversational Design could have been a straightforward view on the emergence of voice interfaces such as Alexa or the UI of customer service bots that live in the bottom right-hand corner of many sites. Instead, Erika Hall explores the rich possibilities of conversational language in UX from a more holistic and inquisitive standpoint. It's a much better book because of that.

ISBN: 978-1-952616-30-3

Published: March 6, 2018

https://abookapart.com/products/conversational-design

Reading notes:

The Human Interface

The first section of the book really sings with its take on the history of spoken language at the centre of human interaction. Burned in my mind are two important insights it brings to the forefront.

In oral culture – “All knowledge is social and lives in memory”

A written culture – “Promotes authority and ownership”

“These conditions may seem strange to us now (oral culture). Yet, viewed from a small distance, they’re our default state. Because our present dominant culture and the technology that defines it depends upon advanced literacy, we’ve become ignorant of the depths of our legacy and blind to the signs of its persistence.”

The contrast in power dynamics is pronounced, transitioning from the shared ownership of knowledge in oral traditions to the individual possession we see today. Our current written and digital culture is held together by concepts of individualism, intellectual property, and single sources of authority and ownership.

The book outlines the key material properties of oral culture as:

Spoken words are events that exist in time.

It’s impossible to step back and examine a spoken word or phrase. While the speaker can try to repeat, there’s no way to capture or replay an utterance.

All knowledge is social and lives in memory.

Formulas and patterns are essential to transmitting and retaining knowledge. When the knowledge stops being interesting to the audience, it stops existing.

Individuals need to be present to exchange knowledge or communicate.

All communication is participatory and immediate. The speaker can adjust the message to the context. Conversation, contention, and struggle help to retain this new knowledge.

The community owns knowledge, not the individuals.

Everyone draws on the same themes, so not only is originality not helpful, it is nonsensical to claim an idea as your own.

There are no dictionaries or authoritative sources.

The right use of a word is determined by how it’s being used right now.

I am now reading Walter Ong's “Orality and Literacy: The Technologizing of the Word”, which is referenced in this part of the book.

Principles of Conversational Design

The 'Principles of Conversational Design' chapter begins by defining an interface as 'a boundary across which two systems exchange information.' It quickly moves to argue that conversation is the original interface and remains the most widely understood and utilized.

Hall then explores the language philosopher Paul Grice's work on the four conversational maxims, and Robin Lakoff's work on 'The Logic of Politeness,' which introduces a fifth maxim.

The conversational maxims are the cooperative foundation or rules by which humans communicate effectively through conversation, referred to by Grice as the 'Cooperative Principle'.

The conversational maxims

Maxim of Quantity: Information

Make your contribution as informative as is required for the current purposes of the exchange.

Do not make your contribution more informative than is required.

Maxim of Quality: Truth (supermaxim: "Try to make your contribution one that is true")

Do not say what you believe to be false.

Do not say that for which you lack adequate evidence.

Maxim of Relation: Relevance

Be relevant.

Maxim of Manner: Clarity (supermaxim: "Be perspicuous")

Avoid obscurity of expression.

Avoid ambiguity.

Be brief (avoid prolixity).

Be orderly.

Maxim of Politeness (Robin Lakoff)

Don’t impose

Give options

Make the listener feel good

The Rest of the Book

The remainder of the book explores the practice of implementing Conversational Design within UX. It is well-written and contains a wealth of valuable material. At this point in time, my interest lies in researching the new linguistic user interfaces being built around LLMs (large language models) and AI chat. The first half of the book delivered that for me.

There are a number of points to extract from the rest of the book:

When confronting a new system, the potential user will have these unspoken questions.

Who are you?

What can you do for me?

Why should I care?

How should I feel about you?

What do you want me to do?

The last question, “What do you want me to do?”, is reflected on later in the book by quoting Jim Kalbach’s work on navigation:

Expectation setting: “Will I find what I need here?”

Orientation: “Where am I in this site?”

Topic switching: “I want to start over”

Reminding: “My session got interrupted. What was I doing?”

Boundaries: “What is the scope of this site?”

Follow up links and reading:

https://abookapart.com/products/conversational-design

https://en.wikipedia.org/wiki/Walter_J._Ong

https://en.wikipedia.org/wiki/Paul_Grice

https://en.wikipedia.org/wiki/Robin_Lakoff

https://en.wikipedia.org/wiki/Cooperative_principle

https://experiencinginformation.com/

Edinburgh JS talk - Text Wrangling and Machine Learning

Talk description

More organisations are looking to automate tasks and gain insights from large amounts of text. JavaScript is a powerful language for text wrangling and machine learning, allowing developers to quickly and easily manipulate text data. We will look at different approaches from rules-based parsing to neural nets.

Exploring what JavaScript frameworks, workflows and processes are available to build real world apps. This is a gentle introduction which should be interesting and useful to all JavaScript coders.

Video

Video of the talk: Starts at 46:30

Slides

The slides for the talk can be found in the GitHub repo: slides.pdf

Code and examples

To view and play with the notebooks you will need to install the https://marketplace.visualstudio.com/items?itemName=donjayamanne.typescript-notebook plugin for VS Code. Note: without VS Code and the typescript-notebook plugin the .nnb notebook files will just be displayed as JSON data. It is also worth installing the Jupyter Notebooks plugin into VS Code.

Once you have done the above, clone the git repo onto your local machine and run npm install in the cloned directory. Open the project in VS Code and you should be able to play with the examples in the notebooks.

Prompt

The prompt tool demo is in a public repo on https://github.com/glennjones/prompt - It is well worth looking at LangChain JS, which is the most heavily used tool of this type at the moment (Apr 2023). It's more advanced and has a lot of additional, useful features my prompt tool does not have.

Hosting multiple node sites on Scaleway with Nginx and Lets Encrypt

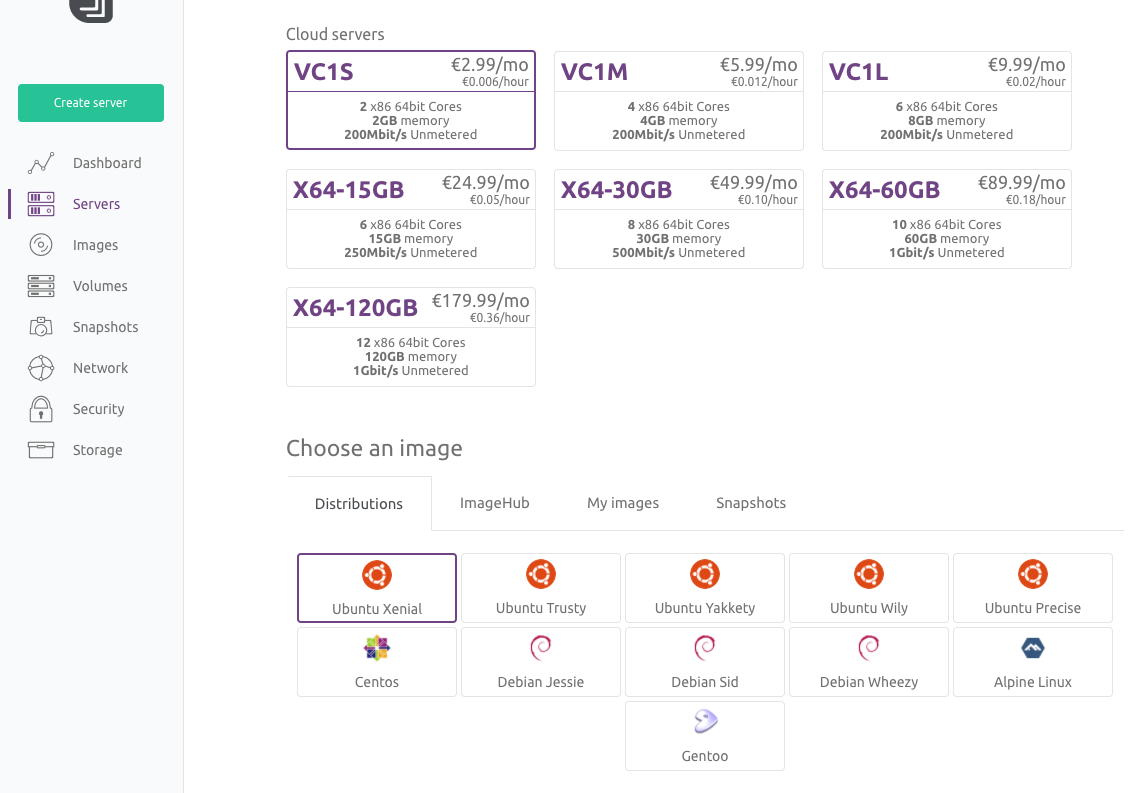

Two years ago I wrote a blog post about hosting Node.js servers on Scaleway. I have now started using Nginx to allow me to host multiple sites on one €2.99 a month server.

This tutorial will help you build a multi site hosting environment for Node.js servers. We are going to use a pre-built Ubuntu server image from Scaleway and configure Nginx as a proxy. SSL support will be added by using the free letsencrypt service. I have written this post to remind myself, but hopefully it will be useful to others.

Setting up the account

- Create an account on scaleway.com – you will need a credit card.

- Create and enable SSH Keys on your local computer, scaleway have provided a good tutorial https://www.scaleway.com/docs/configure-new-ssh-key/. It's easier than it first sounds.

Setting up the server

Scaleway provides a number of server images ready for you to use. There is a Node.js image, but we will use the latest Ubuntu image and add Node.js later. At the moment I am using VC1S servers, which are dual-core x86.

- Log into the Scaleway dashboard, navigate to the "Servers" tab and click "Create Server".

- Give the server a name.

- Select the VC1S server and the latest Ubuntu image, currently Xenial.

- Finally click the "Create Server" button.

It takes a couple of minutes to build a barebones Ubuntu server for you.

Logging onto your Ubuntu server with SSH

- Once the server is set up you will be presented with a settings page. Copy the "Public IP" address.

- In a terminal window log into the remote server using SSH, replacing the IP address in the examples below with your "Public IP" address.

$ ssh root@212.47.246.30

If, for any reason, you changed the SSH key name from id_rsa, remember to provide the path to the private key (not the .pub file).

$ ssh root@212.47.246.30 -i /Users/username/.ssh/scaleway_rsa

Installing Node.js

We first need to get the Node.js servers working. The Ubuntu image does not have all the software we need, so we start by installing Git, Node.js and PM2.

- Install Git onto the server - helpful for cloning GitHub repos

$ apt-get install git

- Install Node.js - you can find different version options at github.com/nodesource/distributions

$ curl -sL https://deb.nodesource.com/setup_6.x | sudo -E bash -

$ sudo apt-get install -y nodejs

- Install PM2 - this will run the Node.js apps for us

$ npm install pm2 -g

Creating the test Node.js servers

We need to create two small test servers to check the Nginx configuration is correct.

- Move into the topmost directory on the server and create an apps directory with two child directories.

$ cd /

$ mkdir apps

$ cd /apps

$ mkdir app1

$ mkdir app2

- Within each of the child directories create an app.js file and add the following code. IMPORTANT NOTE: in the app2 directory the port should be set to 3001.

    const http = require("http");

    const port = 3000; // 3000 for app1 and 3001 for app2
    const hostname = "0.0.0.0"; // listen on all network interfaces

    // Reply to every request with a plain-text greeting that includes the port
    http.createServer(function (req, res) {
      res.writeHead(200, { "Content-Type": "text/plain" });
      res.end("Hello World! " + port);
    }).listen(port, hostname);

    console.log("Load on: " + hostname + ":" + port);

NOTE You can use command line tools like VIM to create and edit files on your remote server, but I like to use Transmit which supports SFTP and can be used to view and edit files on your remote server. I use Transmit "Open with" feature to edit remote files in VS Code on my local machine.

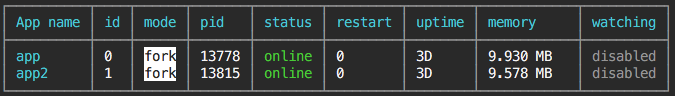

Running the Node.js servers

Rather than running Node directly we will use PM2. It has two major advantages over running Node.js directly. The first is the PM2 daemon, which keeps your app alive forever, reloading it when required. The second is that PM2 will manage Node's cluster features, running a Node instance on multiple cores and bringing them together to act as one service.

Within each of the app directories run

$ pm2 start app.js

The PM2 cheatsheet is useful to find other commands.
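As an alternative to starting each app by hand, both apps can be described in a PM2 ecosystem file. The sketch below is a minimal example rather than the setup used in this post: the app names and the choice of `instances: 'max'` are assumptions, and the script paths assume the /apps directory layout created earlier. Setting `exec_mode` to `cluster` is what asks PM2 to use Node's cluster features; a plain `pm2 start app.js` runs a single process.

```javascript
// ecosystem.config.js - a sketch of a PM2 ecosystem file for the two test apps.
// App names and instance counts are illustrative assumptions.
const config = {
  apps: [
    {
      name: 'app1',
      script: '/apps/app1/app.js',
      instances: 'max',      // one process per available CPU core
      exec_mode: 'cluster',  // share the listening port across the processes
    },
    {
      name: 'app2',
      script: '/apps/app2/app.js',
      instances: 'max',
      exec_mode: 'cluster',
    },
  ],
};

module.exports = config;
```

With this file saved as ecosystem.config.js, running `pm2 start ecosystem.config.js` should start both apps in one step.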

Once you have started both apps you can check they are running correctly by using the following command:

$ pm2 list

At this point your Node.js servers should be visible to the world. Try http://x.x.x.x:3000 and http://x.x.x.x:3001 in your web browser, replacing x.x.x.x with your server's public IP address.

Installing and configuring Nginx

At this stage we need to point our web domains at the public IP address provided for the Scaleway server. For this blog post I am going to use the examples alpha.glennjones.net and beta.glennjones.net.

Install Nginx

$ apt-get install nginx

Once installed, find the file /etc/nginx/sites-available/default on the remote server and change its contents to match the code below. Swap out the server_name to match the domain names you wish to use.

server {

server_name alpha.glennjones.net;

location / {

# Proxy_pass configuration

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header Host $http_host;

proxy_set_header X-NginX-Proxy true;

proxy_http_version 1.1;

proxy_set_header Upgrade $http_upgrade;

proxy_set_header Connection "upgrade";

proxy_max_temp_file_size 0;

proxy_pass http://0.0.0.0:3000;

proxy_redirect off;

proxy_read_timeout 240s;

}

}

server {

server_name beta.glennjones.net;

location / {

# Proxy_pass configuration

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header Host $http_host;

proxy_set_header X-NginX-Proxy true;

proxy_http_version 1.1;

proxy_set_header Upgrade $http_upgrade;

proxy_set_header Connection "upgrade";

proxy_max_temp_file_size 0;

proxy_pass http://0.0.0.0:3001;

proxy_redirect off;

proxy_read_timeout 240s;

}

}
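The two server blocks above differ only in the domain name and the port, so when hosting more sites it may be easier to generate them. The following Node.js sketch prints the same configuration from a list of sites; the `sites` array and the `serverBlock` function are hypothetical helpers, not part of Nginx or of the original setup.

```javascript
// Hypothetical list of sites: the domains and ports match the examples in this post.
const sites = [
  { domain: 'alpha.glennjones.net', port: 3000 },
  { domain: 'beta.glennjones.net', port: 3001 },
];

// Render one Nginx server block that proxies a domain to a local Node.js port.
function serverBlock({ domain, port }) {
  return [
    'server {',
    `    server_name ${domain};`,
    '    location / {',
    '        # Proxy_pass configuration',
    '        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;',
    '        proxy_set_header Host $http_host;',
    '        proxy_set_header X-NginX-Proxy true;',
    '        proxy_http_version 1.1;',
    '        proxy_set_header Upgrade $http_upgrade;',
    '        proxy_set_header Connection "upgrade";',
    '        proxy_max_temp_file_size 0;',
    `        proxy_pass http://0.0.0.0:${port};`,
    '        proxy_redirect off;',
    '        proxy_read_timeout 240s;',
    '    }',
    '}',
  ].join('\n');
}

// Join the blocks into one file body and print it.
const nginxConfig = sites.map(serverBlock).join('\n\n');
console.log(nginxConfig);
```

The output could be redirected into the sites-available file and then checked with the config test that follows.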

Test that Nginx config has no errors by running:

$ nginx -t

Then start the Nginx proxy with:

$ systemctl start nginx

$ systemctl enable nginx

Nginx should now proxy your domains so in my case both http://alpha.glennjones.net and http://beta.glennjones.net would display the Hello world page of apps 1 and 2.

Installing letsencrypt and enforcing SSL

We are going to install letsencrypt and enforce SSL using Nginx rather than Node.js.

- We start by installing letsencrypt:

$ apt-get install letsencrypt

- We need to stop Nginx while we configure letsencrypt:

$ systemctl stop nginx

- Then we create the SSL certificates. You will need to do this for each domain, so twice for our example:

$ letsencrypt certonly --standalone

Once the SSL certificates are created, you should be able to find them in /etc/letsencrypt/live/

- We then need to update the file /etc/nginx/sites-available/default to point at our new certificates

    server {
        listen 80;
        listen [::]:80 default_server ipv6only=on;
        return 301 https://$host$request_uri;
    }

    server {
        listen 443;
        server_name alpha.glennjones.net;
        ssl on;
        # Use certificate and key provided by Let's Encrypt:
        ssl_certificate /etc/letsencrypt/live/alpha.glennjones.net/fullchain.pem;
        ssl_certificate_key /etc/letsencrypt/live/alpha.glennjones.net/privkey.pem;
        ssl_session_timeout 5m;
        ssl_protocols TLSv1 TLSv1.1 TLSv1.2;
        ssl_prefer_server_ciphers on;
        ssl_ciphers 'EECDH+AESGCM:EDH+AESGCM:AES256+EECDH:AES256+EDH';
        location / {
            # Proxy_pass configuration
            proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
            proxy_set_header Host $http_host;
            proxy_set_header X-NginX-Proxy true;
            proxy_http_version 1.1;
            proxy_set_header Upgrade $http_upgrade;
            proxy_set_header Connection "upgrade";
            proxy_max_temp_file_size 0;
            proxy_pass http://0.0.0.0:3000;
            proxy_redirect off;
            proxy_read_timeout 240s;
        }
    }

    server {
        listen 443;
        server_name beta.glennjones.net;
        ssl on;
        # Use certificate and key provided by Let's Encrypt:
        ssl_certificate /etc/letsencrypt/live/beta.glennjones.net/fullchain.pem;
        ssl_certificate_key /etc/letsencrypt/live/beta.glennjones.net/privkey.pem;
        ssl_session_timeout 5m;
        ssl_protocols TLSv1 TLSv1.1 TLSv1.2;
        ssl_prefer_server_ciphers on;
        ssl_ciphers 'EECDH+AESGCM:EDH+AESGCM:AES256+EECDH:AES256+EDH';
        location / {
            # Proxy_pass configuration
            proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
            proxy_set_header Host $http_host;
            proxy_set_header X-NginX-Proxy true;
            proxy_http_version 1.1;
            proxy_set_header Upgrade $http_upgrade;
            proxy_set_header Connection "upgrade";
            proxy_max_temp_file_size 0;
            proxy_pass http://0.0.0.0:3001;
            proxy_redirect off;
            proxy_read_timeout 240s;
        }
    }

- Finally let's restart Nginx:

$ systemctl restart nginx

Nginx should now proxy your domains and enforce SSL so https://alpha.glennjones.net and https://beta.glennjones.net would display the Hello world page of apps 1 and 2.

Letsencrypt auto renewal

Let’s Encrypt certificates are valid for 90 days, but it’s recommended that you renew the certificates every 60 days to allow a margin of error.

You can trigger a renewal by using the following command:

$ letsencrypt renew
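The 90 and 60 day figures can be turned into a quick check. This JavaScript sketch works out a certificate's age, the days left before it expires and whether it has passed the recommended renewal point. The function name and the example dates are made up for illustration; it does not read real certificates.

```javascript
const MS_PER_DAY = 24 * 60 * 60 * 1000;
const VALID_DAYS = 90;        // certificate lifetime quoted above
const RENEW_AFTER_DAYS = 60;  // recommended renewal point quoted above

// Hypothetical helper: given an issue date, report age, days left and
// whether the recommended renewal point has been reached.
function renewalStatus(issuedAt, now = new Date()) {
  const ageDays = Math.floor((now - issuedAt) / MS_PER_DAY);
  return {
    ageDays,
    daysLeft: VALID_DAYS - ageDays,
    shouldRenew: ageDays >= RENEW_AFTER_DAYS,
  };
}

// Example with made-up dates: issued on 1 January, checked on 5 March.
const status = renewalStatus(
  new Date('2024-01-01T00:00:00Z'),
  new Date('2024-03-05T00:00:00Z')
);
console.log(status);
```

Renewing at 60 days leaves a 30 day margin if a renewal attempt fails.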

To create an auto renewal

- Edit the crontab; the following command will give editing options

$ crontab -e

- Add the following two lines

30 2 * * 1 /usr/bin/letsencrypt renew >> /var/log/le-renew.log

35 2 * * 1 /bin/systemctl reload nginx

Resources

Some useful posts on this subject I used